
February 23, 2012

Putting power-hungry data centers on a diet

By Tim Janof, Sparling

If your company is like most, it is throwing money away in its data center or main server room.

These rooms, which are the operational nerve center of most organizations, house electronic equipment that provides data storage and runs email, accounting software, phone systems and other key programs. These vital spaces are also energy hogs that can significantly affect a company’s bottom line.

IBM recently discovered that the energy consumed by its data centers companywide accounted for 45 percent of its energy use, despite the fact that its data centers fill only 6 percent of its physical space. This may come as a shock to some, but it is actually quite common in the corporate sector. With data centers having as much as 40 times the energy density of a standard office space, loads add up fast, as do energy bills.
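To put the IBM figures in perspective, here is a rough sketch that compares energy use per unit of floor area inside the data centers with the rest of the company's space. The function name is ours, and the calculation assumes the two percentages describe the same set of buildings; it is an illustration, not IBM's own method.

```python
def relative_energy_density(energy_share: float, space_share: float) -> float:
    """Energy per unit area in the data centers divided by
    energy per unit area everywhere else (both shares are fractions)."""
    if not (0 < energy_share < 1 and 0 < space_share < 1):
        raise ValueError("shares must be strictly between 0 and 1")
    inside = energy_share / space_share
    outside = (1 - energy_share) / (1 - space_share)
    return inside / outside


# Figures quoted in the article: 45 percent of energy, 6 percent of space.
ratio = relative_energy_density(0.45, 0.06)
print(f"{ratio:.1f}x")  # prints 12.8x
```

On those numbers, each square foot of data center uses roughly 13 times the energy of a square foot elsewhere in the company, well within the "as much as 40 times" range cited for data centers generally.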

This phenomenon has caught the attention of CFOs around the world, who are watching their energy costs rise, not only because of increasing utility rates, but because their IT systems are consuming more and more power every year.

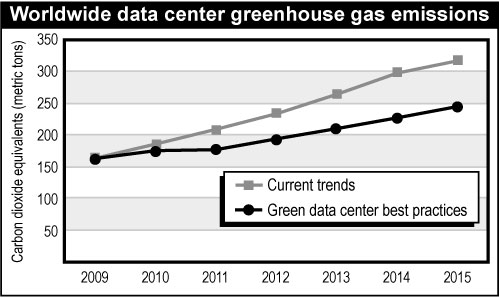

In an effort to maintain a competitive edge, companies are looking for ways to streamline their operations, including increasing energy efficiency in their data centers. Data center “greening” is gaining traction.

Not so long ago, there was typically a chasm between a company’s IT department and its facilities department. IT departments were basically given carte blanche to do whatever they deemed necessary, and facilities departments had to scramble to accommodate them.

If IT said it needed to double its overall power consumption, nobody questioned it. If IT required that server room temperatures be kept to a maximum of 68 degrees Fahrenheit, nobody dared ask why, for fear of damaging those very high-cost “black boxes.”

But times have changed. Traditional assumptions are being scrutinized in an effort to trim costs wisely.

Saving server power

There are two basic components to energy consumption in a data center: server power and air conditioning power. The technology industry has put both elements under the microscope and has discovered that there are significant opportunities for energy savings in each. This system-level reevaluation is being led by global technology titans such as Microsoft, Facebook, Amazon and Google.

On the server power side, “virtualization” is a technique that makes an immediate and measurable dent in IT energy consumption.

This is a process in which a single server is used for multiple applications, rather than having one server for each. Not only are there fewer servers being powered, but the ones that remain are operating more in their energy-consumption “sweet spot.”
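A back-of-the-envelope sketch shows why consolidation pays off. Everything here is an assumption for illustration: the linear power model, the 200-watt idle and 400-watt peak figures, and the utilization targets are hypothetical, not measurements from any real server.

```python
import math


def server_watts(utilization: float,
                 idle_watts: float = 200.0,
                 peak_watts: float = 400.0) -> float:
    """Hypothetical linear power model: a server draws idle power
    even when it is doing nothing, then scales up with load."""
    return idle_watts + (peak_watts - idle_watts) * utilization


def consolidate(n_servers: int, avg_utilization: float,
                target_utilization: float):
    """Pack the combined load of n lightly used servers onto the
    fewest identical hosts that stay at or below the target utilization."""
    total_load = n_servers * avg_utilization
    hosts = math.ceil(total_load / target_utilization)
    watts_before = n_servers * server_watts(avg_utilization)
    watts_after = hosts * server_watts(total_load / hosts)
    return hosts, watts_before, watts_after


# 20 servers idling along at 10 percent, consolidated to hosts
# that are allowed to run at up to 60 percent.
hosts, watts_before, watts_after = consolidate(20, 0.10, 0.60)
print(hosts, round(watts_before), round(watts_after))  # prints 4 4400 1200
```

Under these assumptions, most of the saving comes from eliminating idle power: 16 machines that were drawing power while doing very little are simply switched off.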

Virtualization, along with purchasing from the ever-growing list of Energy Star-listed IT equipment, will yield financial savings for the life of the system.

One can also look at protecting IT with more efficient upstream equipment, such as an uninterruptible power supply, or UPS. A UPS’s typical function is to transfer power seamlessly to battery backup while waiting for a generator to start and re-energize the system, or at least to provide enough time for the IT department to do an orderly shutdown.

If your UPS is reaching the end of its usable life, consider replacing it with a system that has an ultra-high-efficiency mode, which increases a UPS’s efficiency to up to 99 percent. If you have a newer UPS now, it may already have this feature and it simply needs to be activated.
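For a sense of scale, the sketch below estimates the energy a UPS wastes at a given efficiency. The 99 percent figure comes from the text above; the 92 percent efficiency for the older unit and the 100-kilowatt IT load are assumptions chosen only for illustration, and the calculation assumes a constant load all year.

```python
HOURS_PER_YEAR = 8760


def ups_loss_kw(it_load_kw: float, efficiency: float) -> float:
    """Power lost inside the UPS: input power minus the power delivered."""
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    return it_load_kw / efficiency - it_load_kw


def annual_savings_kwh(it_load_kw: float, old_eff: float, new_eff: float) -> float:
    """Energy saved per year by moving from old_eff to new_eff."""
    saved_kw = ups_loss_kw(it_load_kw, old_eff) - ups_loss_kw(it_load_kw, new_eff)
    return saved_kw * HOURS_PER_YEAR


# Hypothetical 100 kW IT load; assumed 92 percent for the old unit.
savings_kwh = annual_savings_kwh(100.0, 0.92, 0.99)
print(f"{savings_kwh:,.0f} kWh per year")
```

These losses also turn into heat inside the building, so in practice the air-conditioning system has less work to do as well.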

Keeping cool for less

Air-conditioning power provides one of the greatest opportunities for energy savings. A rule of thumb used to be that it took as much energy to cool a data center as it took to power the electronics within — cooling watts equals server watts.

This was largely driven by a myth that IT rooms need to be held at 68 degrees. Look at the manufacturer’s specifications for IT equipment and you will see that most are now rated to operate in temperatures of 104 degrees and higher.

It turns out that IT equipment is not as fragile as it used to be, a realization that was a eureka moment for the IT industry. By raising the maximum temperature, some data centers, such as Facebook’s facility in Prineville, Ore., have cut cooling energy to the point that cooling watts are only 10 percent of server watts.
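The effect of that ratio on the total bill is simple arithmetic, sketched below. It counts only server and cooling power, the two components discussed in this article, and ignores lighting, UPS losses and other overhead; the function names are ours.

```python
def total_watts(server_watts: float, cooling_ratio: float) -> float:
    """Server power plus cooling power, where cooling power is
    expressed as a fraction of server power."""
    return server_watts * (1 + cooling_ratio)


def total_reduction(old_ratio: float, new_ratio: float) -> float:
    """Fractional drop in total power when the cooling ratio falls."""
    return 1 - (1 + new_ratio) / (1 + old_ratio)


# Old rule of thumb: cooling watts equal server watts (ratio 1.0).
# Prineville-style result: cooling is 10 percent of server watts (ratio 0.1).
reduction = total_reduction(1.0, 0.1)
print(f"{reduction:.0%}")  # prints 45%
```

In other words, moving from the old rule of thumb to a 10 percent cooling ratio cuts the combined server-plus-cooling load nearly in half without touching a single server.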

Another energy savings strategy is to keep the hot air blown out of the servers away from the cool air blown out of the air-conditioning equipment. Known as “hot aisle/cold aisle containment,” this technique increases efficiency by preventing hot and cold air from mixing, which eases the strain on the air-conditioning system. This may require that servers be rotated within their cabinets so that hot air from one server isn’t being blown into the cold-air intake of a server across a shared aisle.

This is not a call for companies to immediately raise the temperature to 104 degrees. Since IT equipment is still expensive, one doesn’t want to push one’s luck.

A generally accepted temperature limit is 80 to 84 degrees. At roughly 85 degrees, IT equipment internal fans automatically switch to a higher speed, which erodes energy savings significantly.

We in the Northwest live in a climate that affords companies the opportunity to take advantage of our naturally temperate outside air temperatures for cooling IT rooms, instead of depending on standard high-cost, high-energy air conditioning.

In a recent project, it was determined that outside air using standard air-handling units would provide adequate cooling for all but six days per year. Chillers would need to be energized only about 2 percent of the time, which would result in substantial energy savings.
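A first-pass check of this kind can be sketched in a few lines. The temperature list and the 80-degree limit below are made up for illustration; a real study would use hourly weather records for the site and account for humidity and the heat picked up by the air-handling equipment.

```python
def chiller_days(daily_high_f, limit_f):
    """Count the days whose high temperature exceeds the limit, and
    return that count along with its share of the period."""
    if not daily_high_f:
        raise ValueError("need at least one day of data")
    days = sum(1 for temp in daily_high_f if temp > limit_f)
    return days, days / len(daily_high_f)


# Synthetic year: 359 mild days and 6 hot ones (hypothetical data).
synthetic_year = [70.0] * 359 + [90.0] * 6
days, fraction = chiller_days(synthetic_year, limit_f=80.0)
print(days, f"{fraction:.1%}")  # prints 6 1.6%
```

Six days out of 365 is about 1.6 percent of the year, which rounds to the 2 percent figure quoted above.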

The next time you are thinking of replacing your computer room air-conditioning unit, consider whether your dollars would be better spent on installing an outside-air system that will provide a much more attractive return on investment.
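One simple way to frame that comparison is a payback period: the extra up-front cost divided by the yearly energy savings. The dollar amount, energy savings and utility rate below are placeholders, not figures from any project, and the calculation ignores maintenance, incentives and rate increases.

```python
def simple_payback_years(extra_cost: float,
                         annual_kwh_saved: float,
                         price_per_kwh: float) -> float:
    """Years needed for energy savings to repay the added first cost."""
    annual_savings = annual_kwh_saved * price_per_kwh
    if annual_savings <= 0:
        raise ValueError("annual savings must be positive")
    return extra_cost / annual_savings


# Hypothetical: $50,000 premium, 200,000 kWh saved per year, $0.08 per kWh.
payback = simple_payback_years(50_000.0, 200_000.0, 0.08)
print(f"{payback:.1f} years")  # prints 3.1 years
```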

Next steps

The time has come for us to follow the lead of companies like Google that strive for creative ways to reduce energy consumption.

Google recently repurposed an abandoned paper mill in Hamina, Finland, for one of its massive computing facilities. The mill included an underground tunnel that was once used to draw frigid water from the Baltic Sea to cool a steam-generation plant. Google now uses the same tunnel to provide water cooling for its data center via heat exchangers.

We do not mean to suggest that companies should immediately start remodeling their data centers, though virtualization is a least-cost first step.

As server equipment wears out, consider whether you need to buy a replacement or whether you can transfer its function to one of your remaining servers. If you need to purchase new server equipment, look at its efficiency specs and look for the Energy Star label.

As power-distribution or air-conditioning equipment fails, consider alternative technologies whose return on investment will make the decision a no-brainer when energy savings are considered. Saving energy is something that all companies can do to improve their financial bottom lines without affecting functionality, and it happens to be good for the planet as well.

Tim Janof is a principal at Sparling.
